Below are some of my key takeaways from reading the book, Thinking, Fast and Slow by Daniel Kahneman. If you are interested in more detailed notes from this book, they are available here.

Summary:

A brief synopsis of the book is reprinted below from Amazon.

In his mega bestseller, Thinking, Fast and Slow, Daniel Kahneman, world-famous psychologist and winner of the Nobel Prize in Economics, takes us on a groundbreaking tour of the mind and explains the two systems that drive the way we think. System 1 is fast, intuitive, and emotional; System 2 is slower, more deliberative, and more logical. The impact of overconfidence on corporate strategies, the difficulties of predicting what will make us happy in the future, the profound effect of cognitive biases on everything from playing the stock market to planning our next vacation―each of these can be understood only by knowing how the two systems shape our judgments and decisions.

Engaging the reader in a lively conversation about how we think, Kahneman reveals where we can and cannot trust our intuitions and how we can tap into the benefits of slow thinking. He offers practical and enlightening insights into how choices are made in both our business and our personal lives―and how we can use different techniques to guard against the mental glitches that often get us into trouble.

Insights:

Two Systems of Thinking

One of the core ideas of this book is that humans have two primary processes for thinking, System 1 and System 2.

System 1 operates automatically with little effort or sense of voluntary control. System 2 is in charge of self-control and is typically defined by operations that require attention and are disrupted when attention is drawn away.

The main function of System 1 is to maintain and update a model of your personal world, which represents what is normal in it. Most of the time, System 1 processes the world, generating suggestions for System 2 in the form of impressions and intuitions. System 2 adopts the suggestions of System 1, turning these impressions and intuitions into beliefs, impulses, and ultimately voluntary actions.

Most of what we think and do originates in System 1. However, System 2 can be activated if an event is detected that requires the more detailed and specific processing available in System 2. System 2 takes over when things get difficult, and normally has the last word.

System 1 has biases, systematic errors that it is prone to make in specified circumstances. To handle these deficiencies, we must learn to recognize the situations where these mistakes are likely and try to avoid significant mistakes when the stakes are high. While it’s easier to recognize mistakes in others’ behavior than in our own, this book outlines some of the common fallacies and ways to recognize them.

The Good and Bad of Influence

One of the themes of this book is the “law of least effort” which says that the mind will naturally gravitate to the least demanding of several courses of action. This idea can be used to influence an audience and craft a more persuasive message.

The author provides some tactical guidance for crafting a persuasive report or presentation. The core idea is to reduce the cognitive strain on the audience so that they can focus on the core message. This includes a focus on legibility, taking into account font, font size, and the layout of information. This reinforces the idea that the visual aspects of a presentation should be given as much focus as the content itself to maximize the impact of the presentation.

The author also describes how this concept can be used to spread misinformation. He states that a reliable way to make people believe in falsehoods is frequent repetition. It’s hard for the mind to distinguish familiarity from truth so if a person can’t remember the source of the statement or compare it to other things they know, they go with the sense of cognitive ease and believe the statement is true. The internet enables anyone with a message to develop and distribute it easily in a digital medium. It’s important to be aware of this mental trap as the mind is forced to process a constant stream of information.

What We Experience and What We Remember

One of the other concepts explained by the author is the conflict between how we experience an event and how we remember it. Ultimately, how we remember an event is what we keep after an event, even if it is wrong. This incentivizes us to maximize the quality of our future memories and not necessarily the experiences themselves. This is reflected in how many people evaluate vacations, by evaluating the stories and memories we expect to share. When reflecting back on events, we often ignore the duration of an event and emphasize the peak and end of the event. So does that mean we shouldn’t try to be more present and aware, to appreciate events as they happen if we will only remember a caricature of the event in the future? I don’t think so. If we are prone to remembering the peaks and the ends, we should strive to make those as high as possible.

The author also extrapolates this to describe how some aspects of life have a greater impact on how we evaluate our lives than on how we experience them. Being wealthy improves the evaluation of our life, but not necessarily how we experience life. A higher income, potentially, reduces our ability to enjoy the small pleasures of life. As you gain the ability to buy more pleasurable experiences, you lose some of your ability to enjoy the less expensive ones. This could also be combined with the idea of hedonic adaptation where we return to a base level of happiness despite major events or changes. As we make more money, our expectations and desires also rise.

According to the author, the easiest way to increase happiness is to control how you use your time so that you can find more time to do the things you enjoy doing. This also reminded me of a quote from Naval where he says,

A calm mind, a fit body, and a house full of love. These things cannot be bought. They must be earned.

Perceiving the World

Kahneman had a phrase that really struck me as obvious in hindsight but something I hadn’t really reflected on previously:

A remarkable aspect of your mental life is that you are rarely stumped. The normal state of your mind is that you have intuitive feelings and opinions about almost everything that comes your way.

The author describes the role System 1 plays in this false notion of understanding. System 1 maintains and continually updates our own personal model of the world. The model is developed by associations that link ideas of circumstances, events, actions, and outcomes. As these links are strengthened over time, this pattern of ideas determines how we interpret the present and our expectations of the future.

This process has an overwhelming impact on our lives but it is built on a questionable foundation. System 1 is constantly making these links effortlessly and automatically but sometimes the connections between events aren’t appropriate or correct. System 1 suppresses any ambiguity or doubt and constructs stories that are as coherent as possible, even if they aren’t true.

This need to see the world as simple, coherent, and predictable creates some comfortable illusions. Believing we can understand the past makes us think we can predict and control the future. We want to believe that actions have appropriate consequences and don’t want to acknowledge the uncertainties of our existence.

As a result, we underestimate the role of chance in events and overestimate how much we understand about the world. As the author says, “We are far too willing to reject the belief that much of what we see in life is random.”

Regression to the Mean

One of the primary examples of our misguided efforts to apply causal explanations to random events is regression to the mean. The idea is that in a random sampling of events, outliers will be rare and most events will be near the average of the sample, so an extreme result is likely to be followed by one closer to the average.

When we observe an extreme event followed by a more expected event, we tend to apply a causal explanation to it instead of recognizing that this sequence is more of a mathematical consequence due to the role of luck.

We tend to ignore the role of luck in successful outcomes. Success is a combination of talent and luck. The author states that great success is a combination of a little bit more talent but a lot of luck. We tend to focus on the causal role of skill and talent and neglect the role of luck. This results in an illusion of control.
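To convince myself of this, I put together a small simulation. It is only a sketch under my own assumptions (scores are simply talent plus luck, both drawn from the same normal distribution, and the function name `simulate_regression` is mine, not the author's). It picks the top performers on one occasion and checks how the same people do on a second occasion, when talent stays the same and luck is redrawn.

```python
import random

def simulate_regression(n_people=10_000, top_fraction=0.1, seed=0):
    """Score = talent + luck on two occasions; talent is fixed, luck is redrawn."""
    rng = random.Random(seed)
    talent = [rng.gauss(0, 1) for _ in range(n_people)]
    first = [t + rng.gauss(0, 1) for t in talent]
    second = [t + rng.gauss(0, 1) for t in talent]

    # Select the top performers based on the first occasion only.
    order = sorted(range(n_people), key=lambda i: first[i], reverse=True)
    top = order[: max(1, int(n_people * top_fraction))]

    mean_first = sum(first[i] for i in top) / len(top)
    mean_second = sum(second[i] for i in top) / len(top)
    return mean_first, mean_second

first_avg, second_avg = simulate_regression()
print(f"top group, first occasion:  {first_avg:.2f}")
print(f"same group, second occasion: {second_avg:.2f}")
```

The top group still does better than average the second time (they do have more talent), but their average drops noticeably even though nothing caused the drop. The luck that helped put them at the top simply did not repeat.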

Recognizing Uncertainty

This natural tendency to ignore the role of luck in the outcome of events results in an illusion of understanding. We construct and believe coherent narratives about the past while focusing on what we know and ignoring what we don’t. This makes us overly confident in our beliefs and hinders us from recognizing the limitations of our ability to estimate the future.

One framework for better decision making takes advantage of the mathematical principle known as Bayes’ theorem. This theorem specifies how people should change their mind in the light of new evidence.

The first concept is to anchor your judgment of the probability of an outcome on a plausible base rate. Next, take the new evidence and adjust the probability of the outcome from the base rate given the evidence. The key here is the importance of base rates even in the presence of evidence about the specific case.
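A small worked example helped me see why the base rate matters so much. The numbers below follow the cab problem from the book: 15% of cabs are Blue, and a witness who is right 80% of the time says the cab was Blue. The function and parameter names are my own, and this is just a sketch of Bayes’ theorem for a yes/no hypothesis.

```python
def posterior(base_rate, hit_rate, false_alarm_rate):
    """P(hypothesis | evidence) for a binary hypothesis, via Bayes' theorem.

    base_rate:        P(hypothesis) before seeing the evidence
    hit_rate:         P(evidence | hypothesis is true)
    false_alarm_rate: P(evidence | hypothesis is false)
    """
    true_pos = base_rate * hit_rate
    false_pos = (1 - base_rate) * false_alarm_rate
    return true_pos / (true_pos + false_pos)

p = posterior(base_rate=0.15, hit_rate=0.80, false_alarm_rate=0.20)
print(f"{p:.2f}")  # 0.41
```

Even with a fairly reliable witness, the probability that the cab was Blue is only about 41%, because Blue cabs are rare to begin with. Ignoring the base rate would put the answer at 80%.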

Our intuitive impressions based on the evidence are often exaggerated. Since there is more luck in the outcomes of small samples, you should regress your prediction more towards the mean than you would otherwise.

This can be applied to planning as well. We often fall victim to the planning fallacy, where our estimates are closer to a best-case scenario than to a realistic assessment. The idea is to create a baseline prediction that should be the anchor for future adjustments. This baseline prediction should be made by consulting statistics from similar projects in the past. This “outside view” shifts the focus from the specifics of the current situation to the statistics of outcomes in similar situations.

While the outside view is meant to counter the exaggerated optimism of the planning fallacy, we also need to counter the exaggerated caution that results from loss aversion. To do so, we should construct risk policies that can be routinely applied whenever a relevant problem arises. This prevents us from constructing a new preference every time. We can reduce the pain of the occasional loss by the thought that the risk policy we made, assuming it’s correct, will result in positive outcomes over the long run.
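To see why a risk policy pays off over the long run, here is a rough sketch using an illustrative bet I picked for the example: a coin toss where you win $200 or lose $100. The function name and default stakes are my assumptions. A single bet feels risky because you lose half the time, but the chance of ending up behind after accepting many such bets is tiny.

```python
from math import comb

def prob_net_loss(n_bets, win=200, loss=100, p_win=0.5):
    """Exact probability that n independent, identical bets end in a net loss."""
    total = 0.0
    for wins in range(n_bets + 1):
        net = wins * win - (n_bets - wins) * loss
        if net < 0:
            # Binomial probability of exactly this many wins.
            total += comb(n_bets, wins) * p_win**wins * (1 - p_win) ** (n_bets - wins)
    return total

print(prob_net_loss(1))    # 0.5
print(prob_net_loss(100))  # far below 1%
```

Evaluating each bet on its own invites loss aversion to reject it. Evaluating the policy of always taking bets like this one makes the occasional loss much easier to accept.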

The author specifically mentions this in the context of investing, and it reminded me of the principles that Ray Dalio and his team used to define their investment strategies. They applied the concept to encompass a wide variety of decision making at their company. To counter hindsight bias, we should assess the quality of a decision on the process that was used, not on whether the outcome was good or bad. By defining and iterating on the decision-making process, we can improve our chances of making the right decision over time and be better positioned to handle the losses that will occur when making decisions in uncertainty.

Group Decision Making

Much of our lives today is spent working in and with teams. Kahneman provides some suggestions to improve decision making involving groups of people to reduce some of the sources of groupthink.

First, he states that the proper way to elicit information from a group is for one person to collect each person’s opinion individually, rather than starting with a public discussion. This made me think about the modern collaboration tools such as Google Docs. They allow everyone to see each other’s comments but there isn’t really a way to provide comments independently to start and then reveal them. One alternative is for the collaboration to be synchronous instead of asynchronous. This reminded me of a tweet from Andy Raskin where he makes meeting participants write their opinions down at the start of the meeting before they are shared with the group. The act of writing also forces focus and concision, which is why Amazon famously uses written documents to drive meetings instead of presentations.

The other pitfall of groupthink occurs when a decision appears to be made. When a group is close to a decision, especially if the opinion of the group leader is clear, it is no longer acceptable for individuals to raise doubts about the decision. Doubt is suppressed and viewed as disloyalty to the group. This results in a sense of overconfidence in the group where only supporters of the decision have a voice.

To counter this, the author recommends that groups run a pre-mortem, described by Gary Klein. Typically, groups run postmortems or retrospectives at the conclusion of a project to capture lessons learned. The idea with the pre-mortem is for everyone to think about how the project could fail before it starts. This process legitimizes doubts and encourages supporters of the decision to think of risks they haven’t considered earlier. For more information on how to run a pre-mortem, check out “How to Use Pre-mortems to Prevent Problems, Blunders, and Disasters” by Shreyas Doshi.

Prospect Theory

Another major contribution to the field of behavioral economics was prospect theory, which describes how people respond to gains and losses when making decisions under uncertainty. In the previous theory, utility theory, the utility of a gain and of a loss of the same size differed only in sign, not in magnitude. Prospect theory introduced the concept of a reference point relative to which gains and losses are evaluated.

Prospect theory also introduced the concept of loss aversion, where losses loom larger than gains when directly compared to each other. According to the author,

Loss aversion is a powerful conservative force that favors minimal changes from the status quo in the lives of both institutions and individuals. It is the gravitational force that holds our life together near the reference point.
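To make loss aversion concrete, here is a minimal sketch of a prospect-theory style value function. The functional form and the default parameters (exponents of 0.88 and a loss-aversion coefficient of 2.25) are the estimates commonly cited from Tversky and Kahneman's 1992 paper, not numbers taken from this post, and the sketch ignores probability weighting entirely.

```python
def value(x, alpha=0.88, beta=0.88, loss_aversion=2.25):
    """Subjective value of a gain or loss x, measured from the reference point (x = 0)."""
    if x >= 0:
        return x ** alpha
    # Losses are scaled up, so they loom larger than equal-sized gains.
    return -loss_aversion * (-x) ** beta

gain, loss = value(100), value(-100)
print(f"value of +100: {gain:.1f}")
print(f"value of -100: {loss:.1f}")

# A 50/50 gamble to win or lose 100 has a negative subjective value,
# even though its expected monetary value is zero.
print(f"50/50 gamble: {0.5 * gain + 0.5 * loss:.1f}")
```

With these parameters a loss hurts a little more than twice as much as an equal gain pleases, which is why staying at the reference point so often looks like the better option.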

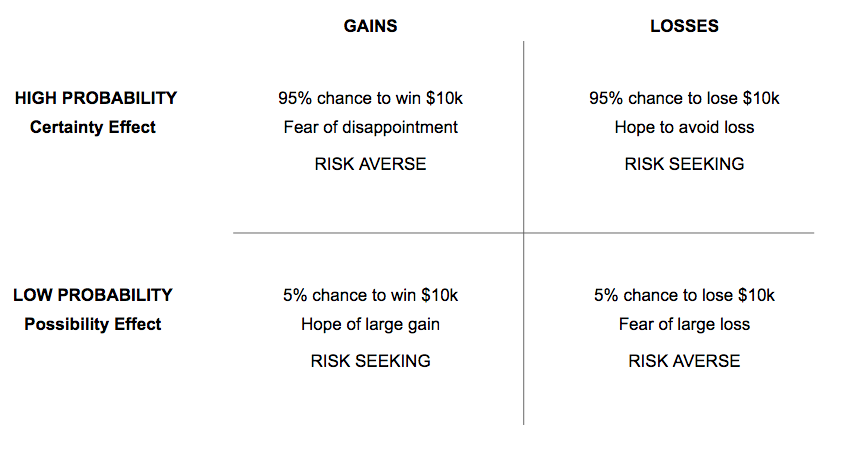

The theory also talks about the impact of extreme probabilities. Unlikely outcomes are given more weight than their probability justifies, while outcomes that are almost certain are given less weight than their probability justifies. Choices between gambles and sure things are resolved differently depending on the outcome. People prefer the sure thing over the gamble (they are risk averse) when the outcomes are good. They tend to reject the sure thing and accept the gamble (they are risk seeking) when both outcomes are negative. The chart above depicts the common scenarios.

Kahneman describes some of the issues that arise from the top right cell specifically. Instead of cutting their losses, people take a desperate gamble. They accept a high probability of making things worse in exchange for a small hope of avoiding a large loss. The thought of accepting the large loss is too painful, and the hope of complete relief is too enticing.

How Economic Theory Drives Government Intervention

Kahneman also identifies some shortcomings in the core assumptions of basic economic concepts. Specifically, the assumption that people are rational and don’t make foolish decisions plays a central role in basic economic theory.

As discussed above, people are often guided by emotions from the perceived gain or loss associated with a decision. We assume that people want what they will enjoy and enjoy what they choose. However, based on the results of several experiments, logically equivalent statements can evoke different reactions depending on how they are framed. The book challenges the idea that people have consistent preferences and know how to maximize them.

The author then discusses the role of government based on the validity of this assumption that humans are rational decision makers. This forms the basis of libertarian policy where the government should not interfere with the individual’s right to choose unless the choice harms others.

To others, this freedom has a cost. People inevitably make bad decisions, and society feels obligated to help them. Additionally, people may need protection from others who deliberately exploit their weaknesses. This leads to the idea of libertarian paternalism, where the government is allowed to incentivize people to make decisions that serve their own long-term interests.

There are other historical consequences associated with an increased amount of freedom. In “The Lessons of History” Durant describes how more freedom results in an increase in inequality. People have different abilities and free people make different choices. Different choices result in different outcomes, which results in wealth concentrating among a minority of people. Therefore, freedom and liberties get curtailed and wealth is redistributed, typically through government, to limit the growth of inequality.

The economic philosophies of socialism and communism use the government to try to ensure everyone has equal outcomes, at the cost of freedom of choice. Capitalism takes the opposite approach, assuming that the capital that businesses create will be reinvested in the business in the pursuit of more capital. Here, government intervention is required to ensure that the profits are gained in a fair way and distributed in a fair manner.

Ultimately, it should be the goal of society and a function of government to try and provide everyone with equal opportunities. Durant suggests focusing on improving the justice and education systems to improve equality. Marc Andreessen argues that healthcare, housing, and education are the core components of a thriving middle class and are currently hindered by the level of government involvement in those industries. It’s a balance and there are tradeoffs to each option but in the end, society and government should always try to give people equal opportunities.

Human Decision Making or Machines?

Since there are so many fallacies humans can fall victim to when making decisions, often without being aware of the trap, should we relegate decision making to machines? The author cites several studies which show that humans are inferior to prediction formulas, even when they are allowed to view the output of the formula. Humans feel that they have access to more information than the formula, and therefore feel like they can overrule it when necessary. Despite this, the human is wrong more often than not.

The author describes two reasons why statistical algorithms outperform humans in low validity environments which are characterized as having a significant degree of uncertainty and unpredictability:

- algorithms are more likely than humans to detect weakly valid cues

- algorithms are more likely to maintain a modest level of accuracy by using such cues consistently

The author then describes a structured interview process that he developed to take advantage of the idea that simple statistical rules are superior to intuitive judgements. The process involves evaluating a candidate subjectively on a specific set of personality traits and scoring each trait separately. This closely resembles the method described by Geoff Smart in the book Who as well as Ray Dalio in Principles.
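Here is a rough sketch of what such a scoring rule could look like. The trait names, the 1 to 5 scale, and the choice to simply add the scores are my own assumptions for illustration. The important part is that each trait gets its own score and the final ranking comes from a fixed formula rather than an overall gut feeling.

```python
# Hypothetical trait list; in practice this would be chosen for the role.
TRAITS = ("reliability", "technical_proficiency", "sociability")

def total_score(ratings, traits=TRAITS, low=1, high=5):
    """Sum independently assigned trait ratings; reject missing or out-of-range values."""
    for trait in traits:
        if trait not in ratings:
            raise ValueError(f"missing rating for {trait}")
        if not low <= ratings[trait] <= high:
            raise ValueError(f"{trait} rating must be between {low} and {high}")
    return sum(ratings[trait] for trait in traits)

candidates = {
    "A": {"reliability": 4, "technical_proficiency": 5, "sociability": 2},
    "B": {"reliability": 3, "technical_proficiency": 3, "sociability": 4},
}

# Rank candidates by the formula, highest total first.
ranked = sorted(candidates, key=lambda name: total_score(candidates[name]), reverse=True)
print(ranked)  # ['A', 'B']
```

Candidate B might leave a better overall impression in the room, but the formula applies the same cues in the same way to every candidate, and that consistency is what the second bullet above is about.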

There are, however, some decisions which are still better left to humans as opposed to algorithms. The field of Machine Learning is based on the concept that algorithms can be developed to solve prediction problems and their performance improves over time as they are given access to more and higher quality data. Kahneman appears to be describing regression, where the output is numerical or continuous. The other category of Machine Learning is known as classification where the output is categorical or discrete. An example would be developing an algorithm to classify images. Significant progress has been made in this field and the autonomous vehicle industry is one that is pushing the limits of an algorithm’s ability to perceive the world. Today though, humans are still better at operating vehicles than an algorithm.